Detailed agenda: https://www.hh-ri.com/forum/20220303.html

-Speaker: Ying-Dar Lin (林盈達), IEEE Fellow, IEEE Distinguished Lecturer, Chair Professor of National Chiao Tung University, Hsinchu, Taiwan

-Biography: Ying-Dar Lin is a Chair Professor of computer science at National Chiao Tung University (NCTU), Taiwan. He received his Ph.D. in computer science from the University of California at Los Angeles (UCLA) in 1993. He was a visiting scholar at Cisco Systems in San Jose during 2007–2008, CEO at Telecom Technology Center, Taiwan, during 2010–2011, and Vice President of National Applied Research Labs (NARLabs), Taiwan, during 2017–2018. He cofounded L7 Networks Inc. in 2002, which was later acquired by D-Link Corp. He also founded and directed the Network Benchmarking Lab (NBL) from 2002; NBL reviewed network products with real traffic and automated tools, became an approved test lab of the Open Networking Foundation (ONF), and spun off O’Prueba Inc. in 2018. His recent research interests include machine learning for cybersecurity, wireless communications, network softwarization, and mobile edge computing. His work on multi-hop cellular was the first along this line; it has been cited over 1,000 times and standardized into IEEE 802.11s, IEEE 802.15.5, IEEE 802.16j, and 3GPP LTE-Advanced. He is an IEEE Fellow (class of 2013), IEEE Distinguished Lecturer (2014–2017), and ONF Research Associate (2014–2018), and received the K. T. Li Breakthrough Award in 2017 and the Research Excellence Award in 2017 and 2020. He has served or is serving on the editorial boards of many IEEE journals and magazines, including as Editor-in-Chief of IEEE Communications Surveys and Tutorials (COMST), whose impact factor rose from 9.22 to 23.7 during his term (2017–2020). He published a textbook, Computer Networks: An Open Source Approach, with Ren-Hung Hwang and Fred Baker (McGraw-Hill, 2011).

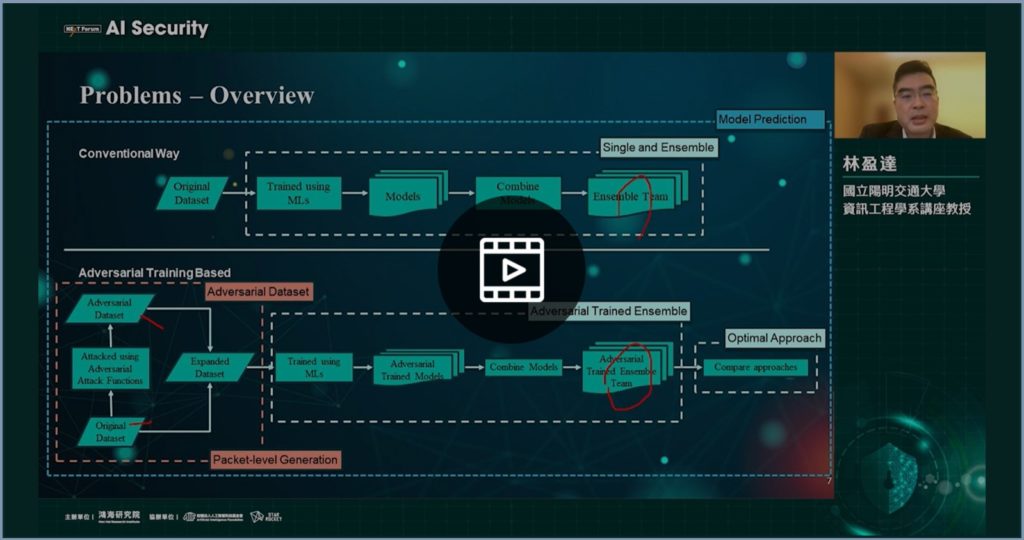

-Talk Title: EAT: Ensemble Adversarial Trained Machine Learning in Defending Against Evaded Intrusions

-Abstract: Network intrusion detection systems (NIDS) now adopt machine learning (ML) to better detect a wider range of attack variants. However, ML itself is known to be vulnerable to adversarial attacks, in which some parameter values of the input or training data are altered to degrade ML accuracy. A number of defense strategies have been proposed, but mostly in the image recognition area. In this work, we propose Ensemble Adversarial Trained (EAT) machine learning, which trains multiple models on adversarially attacked datasets and then assembles them into a team. We compare four approaches: basic, ensembled, adversarial, and EAT. In our experiments, the basic models attained an average F1 score of 93% with a wide range (82.6–99.8%), but the average dropped to 29% under adversarial attacks, and to 7% under the strongest attack, Projected Gradient Descent (PGD). With ensembling, adversarial training, and EAT, the average score recovered to 80%, 88%, and 91%, respectively. Furthermore, cosine similarity predicts the models and approach implemented behind the system with an accuracy of 99%, which means a black-box test could reveal the ML approach taken inside the box.
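
-Illustration (not from the talk): The idea in the abstract, training several models on adversarially attacked data and letting them vote, can be sketched with a toy example. Everything below is an assumption made for illustration: the logistic-regression model, the hand-made two-feature dataset, the helper names (`train`, `pgd`, `adversarial_train`, `ensemble_predict`), and the use of three PGD budgets as a stand-in for the different attacks and model types used in the actual work, which the abstract does not specify.

```python
import math

def sigmoid(z):
    # Numerically safe logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict_proba(model, x):
    # model is a (weights, bias) pair; returns P(label == 1 | x).
    w, b = model
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def train(data, epochs=200, lr=0.5):
    """Fit logistic regression with plain batch gradient descent."""
    dim = len(data[0][0])
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * dim, 0.0
        for x, y in data:
            err = predict_proba((w, b), x) - y  # d(log-loss)/d(logit)
            gw = [g + err * xi for g, xi in zip(gw, x)]
            gb += err
        w = [wi - lr * g / len(data) for wi, g in zip(w, gw)]
        b -= lr * gb / len(data)
    return w, b

def pgd(model, x, y, eps, alpha=0.05, steps=20):
    """L-infinity PGD: signed gradient ascent on the log-loss, then
    clip every feature back into [x - eps, x + eps]."""
    w, _ = model
    x_adv = list(x)
    for _ in range(steps):
        err = predict_proba(model, x_adv) - y
        grad = [err * wi for wi in w]  # gradient of the loss w.r.t. the input
        stepped = [xa + alpha * ((g > 0) - (g < 0)) for xa, g in zip(x_adv, grad)]
        x_adv = [min(max(s, xi - eps), xi + eps) for s, xi in zip(stepped, x)]
    return x_adv

def adversarial_train(data, eps):
    """Craft PGD examples against a basic model, then retrain on clean + attacked data."""
    base = train(data)
    attacked = [(pgd(base, x, y, eps), y) for x, y in data]
    return train(data + attacked)

def ensemble_predict(models, x):
    """Majority vote over the team of models."""
    votes = sum(predict_proba(m, x) >= 0.5 for m in models)
    return 1 if 2 * votes > len(models) else 0

# Toy, hand-made data (an assumption): label 1 = "attack", 0 = "benign".
DATA = [([0.0, 0.2], 0), ([0.3, 0.1], 0), ([0.2, 0.4], 0), ([0.1, 0.0], 0),
        ([1.0, 0.9], 1), ([0.8, 1.1], 1), ([1.2, 0.7], 1), ([0.9, 1.0], 1)]

basic = train(DATA)                                            # "basic" approach
eat = [adversarial_train(DATA, eps) for eps in (0.1, 0.2, 0.3)]  # EAT-style team

x_clean = [1.0, 0.9]
x_adv = pgd(basic, x_clean, 1, eps=0.3)
print("basic score on clean vs. attacked input:",
      predict_proba(basic, x_clean), predict_proba(basic, x_adv))
print("ensemble vote on clean input:", ensemble_predict(eat, x_clean))
```

The sketch only shows the mechanics (attack, retrain, vote). It makes no attempt to reproduce the F1 figures quoted in the abstract, which come from the speaker's own models and datasets.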